An AI tool going down mid-workday is like a fire alarm going off all afternoon. You can’t focus, you can’t ignore it, and there’s no actual fire. Just a building full of people standing in the parking lot wondering if they should go get coffee.

March 2, 2026: Claude.ai down for about fourteen hours. March 11: an OAuth bug locks Claude Code users out while the API itself is happily running. April 15: Opus 4.6 throwing errors across the chatbot, the CLI, and the API. Three big ones in six weeks, plus the smaller ones I’ve stopped counting.

I’m not panicking. We don’t run any LLMs in production at Victoria Garland. But I am annoyed, because the “team member” who was helping me build this morning just clocked out without telling anyone, and they took my context with them.

What 99.1% actually buys you

Claude’s API has been running at about 99.1% uptime over the last 90 days. That sounds great. It’s the kind of number you’d put on a slide.

It is, until you do the math. 99.1% over a 90-day quarter leaves 0.9% of 2,160 hours, or roughly nineteen and a half hours of degraded service. That's nearly twenty hours a quarter during which your most-loaded teammate, the one with all your context, all your half-formed plans, all your in-flight refactors, is unreachable.
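The arithmetic is worth checking for any provider you depend on. Here is a minimal Python sketch. It assumes "uptime" is simply the fraction of wall-clock time the service counts as healthy, and status pages may weight partial outages differently. At 99.1% over 90 days it comes out to about 19.4 hours.

```python
HOURS_PER_DAY = 24


def downtime_hours(uptime_pct: float, window_days: float) -> float:
    """Hours of non-uptime implied by an uptime percentage over a window of days."""
    if not 0.0 <= uptime_pct <= 100.0:
        raise ValueError("uptime_pct must be between 0 and 100")
    total_hours = window_days * HOURS_PER_DAY
    return total_hours * (100.0 - uptime_pct) / 100.0


if __name__ == "__main__":
    # Compare a few common "nines" over a 90-day window.
    for pct in (99.0, 99.1, 99.9, 99.99):
        print(f"{pct}% over 90 days -> {downtime_hours(pct, 90):.1f} h")
```

Each extra nine cuts the downtime by a factor of ten, which is why 99.1% and 99.9% feel so different in practice.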

Now scale that. 95% of developers report using AI coding tools at least weekly. 75% lean on them for more than half their actual coding. The average experienced dev runs 2.3 AI tools concurrently. We have, collectively and very quickly, made an entire profession’s productivity dependent on a handful of providers running in a handful of data centers.

We’re starting to find out what that costs.

Build-time vs. production AI

Here’s the distinction I keep coming back to, because it changes the whole conversation.

If you put an LLM in your production path (your checkout flow, your support chat, your search ranking), an outage is catastrophic. Customers see it. Revenue stops. The pager goes off and someone has to explain it to a stakeholder who didn’t know there was an LLM in there to begin with.

If you put an LLM in your build process (generating code, drafting copy, researching, writing tests), an outage is annoying. The fire alarm. You can stand in the parking lot for a few hours and you'll probably survive.

We’re squarely in the second camp. AI is part of how we build, but the things we ship to clients don’t call out to a model in real time. Build, then share. That’s a for-now rule, not a forever rule. For now, the math works. Build-time outages are a productivity tax, not a customer-facing incident.

The catch is that “productivity tax” is doing a lot of heavy lifting in that sentence.

The thing that actually hurts is in-flight context

When Claude blinks out at hour three of a session, the loss isn’t the API call that failed. The loss is the conversation behind it. The plan you talked through. The five files you’d already pulled in. The mental model you co-built with the tool, half of it sitting in the chat history and half of it sitting in your head.

You can switch to another model. I do. The problem is that the other model wasn’t there. It doesn’t know what you decided fifteen turns ago. It doesn’t know which approach you ruled out. You either re-explain everything (slow, lossy, irritating), or you start over and pretend the last two hours didn’t happen.

The pain scales with how multi-step the workflow is. Single-shot autocomplete? Trivial to swap. Copilot, Cursor, whatever, doesn’t matter. A long agentic session orchestrating multiple steps with ambiguous boundaries (is this a chat? is it a model generating something in the cloud? am I waiting on the agent or on a render?) is a different problem. Mid-stream is the worst place to lose your tool.

This is where I want software to catch up. I’d love a local transcript layer where the conversation isn’t owned by any single provider, so when one model goes dark, a different model can pick up the thread and continue. Maybe replay the last few turns to re-prime, then keep going. I’m guessing this exists in some form already. I haven’t had time to build it. If it doesn’t exist yet, somebody please ship it.
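Roughly what I mean, as a minimal sketch rather than a finished tool. Everything here is hypothetical: the `Turn` and `LocalTranscript` names, the JSONL file format, and the assumption that whichever model takes over accepts plain role/content messages. It never talks to a provider. It just keeps the conversation on your own disk so you can hand the last few turns to whatever model is still up.

```python
import json
import tempfile
from dataclasses import asdict, dataclass
from pathlib import Path


@dataclass
class Turn:
    role: str        # provider-neutral label, e.g. "user" or "assistant"
    content: str
    model: str = ""  # which model produced this turn, if any (hypothetical field)


class LocalTranscript:
    """Append-only JSONL transcript that lives on your disk, not the provider's."""

    def __init__(self, path: Path) -> None:
        self.path = Path(path)

    def append(self, turn: Turn) -> None:
        # One JSON object per line, so a crash mid-session loses at most one turn.
        with self.path.open("a", encoding="utf-8") as f:
            f.write(json.dumps(asdict(turn)) + "\n")

    def load(self) -> list[Turn]:
        if not self.path.exists():
            return []
        with self.path.open(encoding="utf-8") as f:
            return [Turn(**json.loads(line)) for line in f if line.strip()]

    def reprime(self, last_n: int) -> list[dict]:
        """Last N turns as plain role/content dicts for whichever model picks up."""
        turns = self.load()[-last_n:] if last_n > 0 else []
        return [{"role": t.role, "content": t.content} for t in turns]


if __name__ == "__main__":
    log = LocalTranscript(Path(tempfile.mkdtemp()) / "session.jsonl")
    log.append(Turn("user", "Plan: split the webhook handler into three jobs."))
    log.append(Turn("assistant", "Agreed. Ruled out the queue-per-topic approach.", model="model-a"))
    log.append(Turn("user", "Model A is down. Continue from here."))
    print(log.reprime(2))
```

Replaying only the last few turns is a deliberate trade-off: it keeps the re-prime short enough for any model's context window, at the cost of losing decisions made earlier in the session. Writing a short running summary into the transcript as its own turn would be one way to narrow that gap.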

We can’t go to space with Claude (yet)

Here’s the bigger structural point, because it’s not really about Claude.

As a tool, the current generation of AI is excellent. I’d be a worse engineer without it. I’m not turning it off. But as a redundant, reliable system, the kind you’d build a real piece of infrastructure on, we’re seeing the flaws in real time. Three significant outages in six weeks isn’t a fluke. It’s the natural consequence of one company hitting #1 on the App Store while simultaneously serving a meaningful chunk of the global developer workforce. Consumer demand crushes the same infra that paid devs depend on. Welcome to the trade-off.

We can’t go to space with Claude yet. We can’t run the air traffic control system on Claude. We probably shouldn’t run our checkout on Claude either, unless we’ve thought very hard about the fallback path.
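Why a fallback path helps, in numbers. This sketch assumes two providers that each hit 99.1% and, crucially, fail independently. That assumption is optimistic: shared cloud regions, shared upstream dependencies, or everyone failing over to the same backup at the same moment can all correlate outages. Under it, both are down at once only 0.009 × 0.009 of the time, which is about 99.99% combined availability.

```python
def combined_availability(*availabilities: float) -> float:
    """Chance at least one provider is up, ASSUMING failures are independent."""
    p_all_down = 1.0
    for a in availabilities:
        if not 0.0 <= a <= 1.0:
            raise ValueError("availability must be a fraction between 0 and 1")
        p_all_down *= 1.0 - a  # every provider must be down at the same time
    return 1.0 - p_all_down


if __name__ == "__main__":
    single = 0.991
    pair = combined_availability(single, single)
    print(f"one provider: {single:.4%}, two independent providers: {pair:.4%}")
```

The number only says that something will answer. It does nothing for the in-flight context problem, which is why the transcript still matters.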

The fix isn’t “stop using Claude.” The fix is treating any single-provider AI dependency the way you’d treat any single point of failure. Have a backup model ready. Keep your prompts portable. Don’t let the multi-step workflow get so tangled with one provider’s quirks that switching costs you a day. Pay attention to where the LLM lives in your system. A build-time outage you can ride out; a customer-facing one you cannot.
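A minimal sketch of "have a backup model ready" in Python. The providers are stand-ins: each is just a function from a prompt string to text, so a real SDK client would need a thin wrapper (not shown) to fit that shape. `complete_with_fallback` and `AllProvidersFailed` are hypothetical names. Keeping the prompt a plain string is the portability part: nothing in it depends on one provider's message format.

```python
from typing import Callable, Sequence

# Hypothetical provider shape: takes a prompt string, returns generated text.
Provider = Callable[[str], str]


class AllProvidersFailed(RuntimeError):
    """Raised when every provider in the chain errored."""


def complete_with_fallback(
    prompt: str, providers: Sequence[tuple[str, Provider]]
) -> tuple[str, str]:
    """Try providers in order; return (provider_name, text) from the first success."""
    errors: list[str] = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # outage, auth failure, rate limit, timeout...
            errors.append(f"{name}: {exc!r}")
    raise AllProvidersFailed("; ".join(errors))


if __name__ == "__main__":
    def primary(prompt: str) -> str:
        raise ConnectionError("primary provider unavailable")  # simulated outage

    def backup(prompt: str) -> str:
        return f"backup answered: {prompt}"

    print(complete_with_fallback("Summarize the refactor plan", [("primary", primary), ("backup", backup)]))
```

Catching every exception is a blunt choice for a sketch. In practice you would probably retry transient errors on the primary before failing over, and log which provider actually answered.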

And when the next outage comes, and it’s coming, don’t take it personally. Your teammate just clocked out. They’ll be back. Go take the dog for a walk.

Shameless plug: At Victoria Garland we build serious Shopify infrastructure for merchants who want their store to keep running whether or not the AI hype cycle is having a good day.

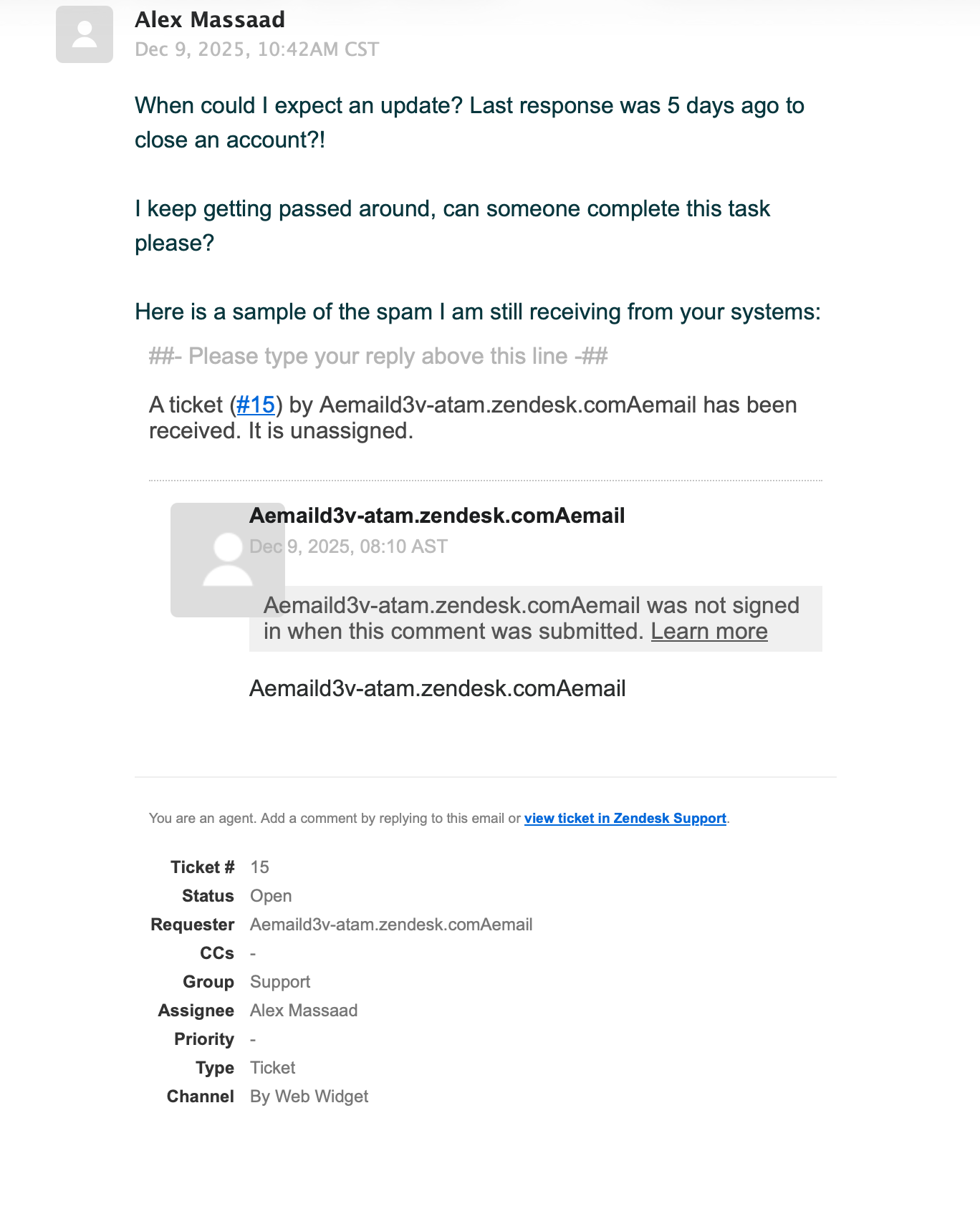

]]> The default Dashing dashboard. Shopify open-sourced this framework and every team ran their own version. It’s defunct now, but in 2013 these screens were everywhere.

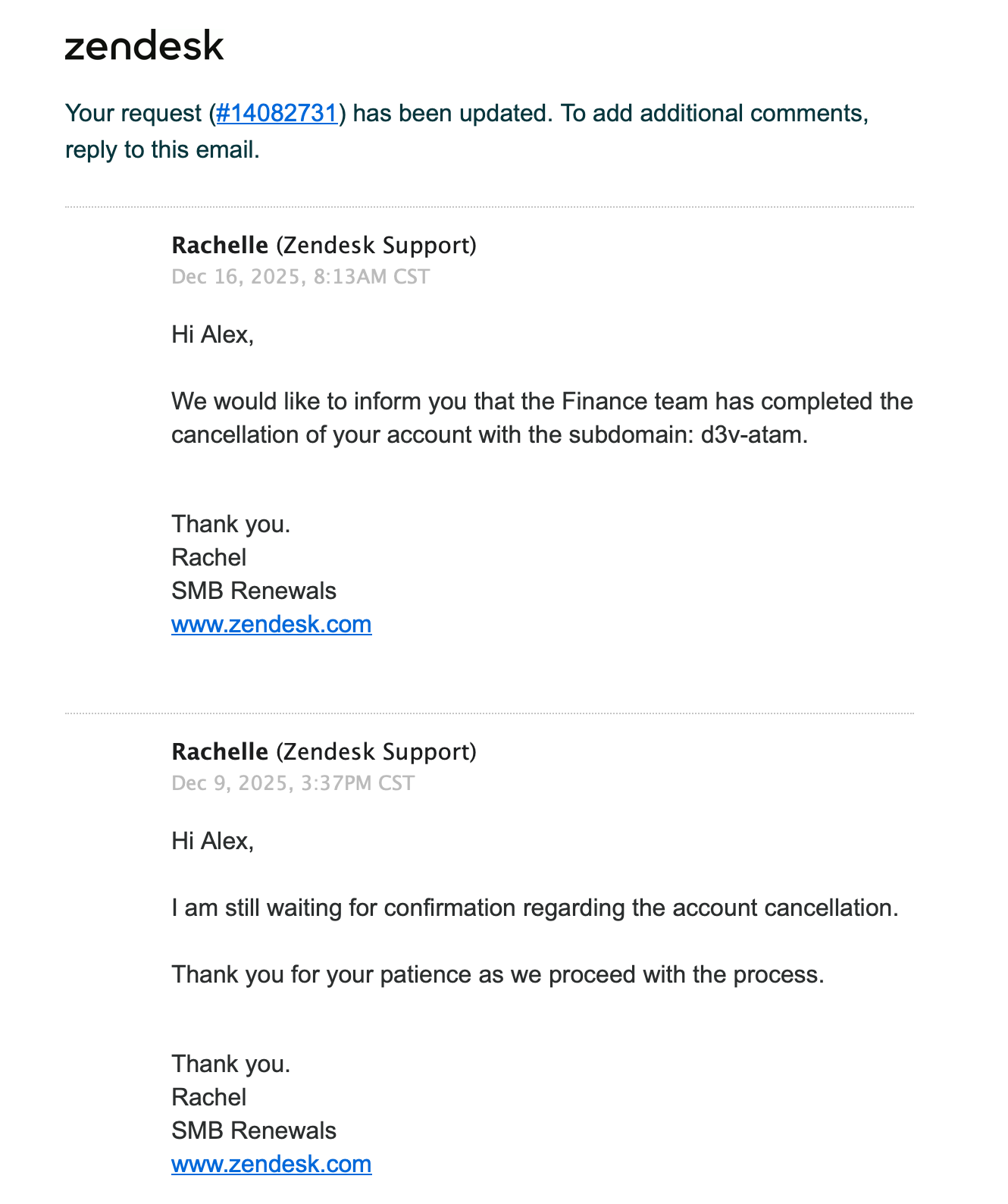

The default Dashing dashboard. Shopify open-sourced this framework and every team ran their own version. It’s defunct now, but in 2013 these screens were everywhere. My own dashboard. Hydro Ottawa usage graphs (apprently lagging badly!), cat gifs, weather and countdowns — if I could scrape it, it went on the screen.

My own dashboard. Hydro Ottawa usage graphs (apprently lagging badly!), cat gifs, weather and countdowns — if I could scrape it, it went on the screen. The Waterloo office mega dashboard. This thing was massive in the former distillery’s barrel-lined walls.

The Waterloo office mega dashboard. This thing was massive in the former distillery’s barrel-lined walls. The Montreal office dashboards. This is the best representation of what those screens actually looked like day-to-day: mounted on brick walls, always on, always watching.

The Montreal office dashboards. This is the best representation of what those screens actually looked like day-to-day: mounted on brick walls, always on, always watching.